Assessing community disaster resilience using a balanced scorecard: lessons learnt from three Australian communities

Imogen Ramsey, Malinda Steenkamp, Andrea Thompson, Olga Anikeeva, Paul Arbon, Kristine Gebbie

Peer-reviewed Article


Background

In December 2009, the Council of Australian Governments (COAG) adopted a national resilience-based approach to disaster management, recognising that a cooperative effort is required to strengthen the local capacity and capability of Australian communities to withstand and recover from disaster events (Australian Government 2011). The National Strategy for Disaster Resilience (NSDR) was established to support the development of disaster resilience, and sets out how the nation should strengthen partnerships, improve understanding of the risk environment, and build adaptive and empowered communities. In recent years Australian governments, organisations and communities have collaborated on reforming emergency management approaches to develop and embed the goals of community disaster resilience.

The Torrens Resilience Institute (TRI) supported the NSDR through research that clarified the definition of community disaster resilience. According to Arbon (2014), ‘community resilience is a process of continuous engagement that builds preparedness prior to a disaster and allows for a healthy recovery afterwards’ (p. 12). Various organisations have developed measurement frameworks for disaster resilience (Building Resilient Regions 2011, Cutter et al. 2008a, 2008b, Emergency Volunteering 2011, Longstaff et al. 2010, Renschler et al. 2010, UNDP Drylands Development Centre 2013), although few have been designed specifically for use by communities (Arbon 2014). These tools are reviewed in detail by the United Nations Development Programme (Winderl 2014). In 2012, with assistance from communities, the TRI developed the Community Disaster Resilience Scorecard and Toolkit: a balanced tool that enables communities to assess their disaster resilience using a participatory methodology (Arbon et al. 2012). The Toolkit defines a resilient community as one where members are connected and able to work together in the event of an emergency in order to:

- function and sustain critical systems, even under stress

- adapt to changes in the physical, social or economic environments

- be self-reliant if external resources are limited or cut off

- learn from experience to improve over time.

Source: TRI Toolkit at www.flinders.edu.au/tri.

The Community Disaster Resilience Scorecard and Toolkit was trialled by four Australian communities in 2012. The findings showed that the Scorecard helped communities to better foresee threats and risks, engage with emergency management agencies, acquire a sense of community and social capital, and take collective responsibility to reduce the socio-economic impact of disruptive challenges and disasters (Arbon et al. 2012).

In June 2014, the TRI commenced a 12-month evaluation of the Scorecard (Arbon et al. 2015). Three communities (two from Tasmania and one from Victoria) successfully implemented the Scorecard in early 2015 as part of this evaluation.

Method

Recruitment

Representatives from local government associations (NSW, NT, Qld, SA, Tas, Vic and WA) distributed information about the project to prospective councils via circulars. Sixteen communities expressed interest and took part in one of two teleconferences, held in July and September 2014, to learn more about the Scorecard and the evaluation project.

Follow-up

Of the original 16, three went ahead and implemented the Scorecard.1 Approximately 25 guided telephone interviews and follow-up conversations were conducted. The interviews were based on semi-structured questions, but conversations evolved naturally as led by the interviewees. Correspondence between the TRI and the three participating communities was maintained throughout the project, and assistance was provided where required. Site visits to two of the communities occurred in February 2015; the third community was contacted via email and telephone because of the timeframe of its implementation.

Results

Some councils reported barriers to implementing the Scorecard, including a lack of senior management support, a lack of operational support, competing initiatives, insufficient resources and varying levels of individual interest. These challenges are discussed in the evaluation report (Arbon et al. 2015). The results from the three communities that implemented the Scorecard are described below as case studies.

Case study 1: a comprehensive and inclusive approach

Background

In one Tasmanian municipality, interest in the Scorecard originated with the emergency management (EM) coordinator and was supported by the EM committee and the mayor. The local government area included residential and rural areas, a larger, predominantly urban district and several small surrounding towns, some with large transient populations. The council’s EM structure had recently converted to a community-based group but still included expert input from agencies. The council’s EM sector had a strong community-resilience focus and recognised the importance of both macro- and micro-level practice in delivering effective response and recovery actions.

Process

Six representatives from the EM committee agreed to use the Scorecard within their respective communities, with support from council. It was proposed that, once the individual exercises were completed, the central council would collate the separate community results to produce an overall municipal rating.

An initial meeting was held with the EM committee, representatives from the selected communities and project staff from the TRI. The committee’s response to the Scorecard was generally positive, with several members commenting on its potential long-term value. However, a few members voiced concerns about their ability to initiate and manage the process. The representatives decided they would be more comfortable with a facilitator overseeing the process in each community to ensure consistency.

Council officers identified and invited members in each community to form working groups. The response rate was high, with most invited members agreeing to be involved. An experienced facilitator was appointed and consulted. Following this, the group decided to trial the exercise in one community with the support and oversight of an EM committee representative. Once the trial and a review of the processes were complete, the council would discuss the next area for consultation.

At the time of writing, the Scorecard has been implemented in three distinctly different communities within the municipality: two small urban areas with homogeneous populations and one geographically dispersed area characterised by a number of small population pockets. Each community held the recommended three meetings: an explanatory session, a meeting to discuss and allocate scores, and a review of the scores and subsequent recommendations for the council. In each community the facilitator managed the process, the council provided relevant Census data, and the municipal EM coordinator chaired the working group.

Outcomes

The EM coordinator provided written and verbal feedback to the TRI following completion of the Scorecard in the first two communities. Of note, the involvement of people from various community agencies in the process prompted others to recognise the importance of connectedness and engagement in promoting resilience. According to the EM coordinator, more than ten community members expressed interest in assisting with the development and implementation of recommendations from the Scorecard assessment. In his view, such enthusiasm should be harnessed to achieve greater community acceptance of actions and recommendations from government agencies, local government and service providers.

Of the Scorecard approach, he noted that the working groups had recognised the importance of balancing subjective contributions with factual information. A number of participants had gained valuable insight as a result of interpreting relevant Census data.

The council plans to repeat this process for the remaining three communities and to compile a consolidated report of their findings and recommendations at the conclusion of the project. The EM coordinator also proposed scheduling a de-briefing meeting to allow representatives of the working groups to share their experiences and identify strengths and challenges unique to each community. It is anticipated that the Scorecard will have an important role to play in conveying messages to decision-makers. The council is seeking to modify the participatory approach of the exercise to evaluate their capacities and capabilities.

Case study 2: the straightforward approach

Background

A second Tasmanian council was highly proactive in implementing the Scorecard. The council area includes a major town and eight smaller communities, with a total population of approximately 6500 people.

Process

The council team (comprising three staff) attempted to recruit working group members through established formal council processes, including advertising in local media. They received few responses and subsequently used their ‘local insider’ knowledge to directly invite key community members known to be well connected and representative of the local population. This approach was successful and most invited members agreed to participate. The working group consisted of 12 individuals from different communities in the area, and included newly elected members, the school bus driver, business owners, EM officers and the local priest.

Three meetings were organised as recommended. A central governance approach was adopted, whereby the council assumed a key role in facilitating the meetings and providing demographic and other relevant information. One member of the council team had sufficient experience and credibility to be accepted as chair. He was aware of diverse views within the working group and ensured that all representatives had an equal opportunity to be heard in the discussions.

During the first meeting, members consolidated information about their communities’ demographics and environmental settings. The second meeting involved compiling further information and completing the Scorecard. Although a third meeting had been scheduled to consolidate findings and plan a way forward, the working group completed this part of the exercise during the second meeting.

Outcomes

Through the process of completing the Scorecard, council members agreed that information about EM planning was neither well known nor well understood in the community. This was evident in discrepancies between the scores that EM personnel and community members allocated to Scorecard items. Emergency personnel commonly indicated that an issue had been addressed and allocated a high score, whereas community members were less confident about the relevant issues and often preferred to allocate a lower score. This unexpected finding prompted the council to review its approach to disseminating EM information.

Council members sought advice from the working group members on how information about planned disaster assistance could be made more accessible to the community, which resulted in several practical solutions. The council prepared information to be incorporated into its new residents’ information kit, such as relevant telephone numbers, links to specific plans available on the council’s website (e.g. bushfire survival booklet, checklists for leaving early or staying and defending), and Red Cross and emergency alert information. A working group member distributed this information in the community where she resided via a self-funded mail drop.

Engagement with the Scorecard process helped to establish ties between the local government, community leaders and other authorities. This, in turn, facilitated a better understanding of the various roles these groups play in emergency planning and response, and of the resources available to the community, neither of which had previously been well known. There are plans for the Scorecard exercise to be repeated in some of the smaller communities.

Case study 3: training community leaders

Background

A rural Victorian municipality with a population of around 8500 people adopted a unique approach to implementing the Scorecard. The Scorecard was to be used within the framework of a resilience leadership program, which ran for six months from November 2014 to May 2015 and involved 22 community members, representing eight townships in the shire. The program formed part of a raft of resilience-based initiatives that the community development team planned to implement over the next few years. It provided opportunities for community members to understand the impact of disaster events on small communities, create strong relationships and networks, and improve their capacity to respond effectively in emergency situations. Key topics included disaster planning, response and recovery cycle, leadership styles, project planning and the roles of emergency services and agencies.

Interest in the Scorecard was led by the community development team leader and supported by a proactive council, which had a central focus on community-action planning and a vision to build empowered and self-sufficient communities. The shire had experienced a 14-year drought, bushfires and minor flooding in recent years, as well as a major disruptive event in one of its communities. This previous disaster experience and a supported local resilience strategy were key contextual factors that drove interest in, and implementation of, the Scorecard.

The community members participated in the Scorecard exercise in a way that aligned with their own definition of resilience. Across the municipality, good leadership was perceived as critical to the formation of resilient networks, and the leadership program had been introduced to equip local residents with the knowledge, resources and skills necessary to make their communities truly capable and resilient. By incorporating the Scorecard exercise into their leadership program, the community members took ownership of the resilience-building process.

Process

An invitation to community members to attend an information session about the resilience leadership program was advertised in the local newsletter. A preliminary session with interested volunteers was held to define resilience in the local context and discuss the inherent characteristics of resilient communities. A total of 24 representatives from ten small communities participated in the program and used the Scorecard to benchmark individual communities and develop resilience profiles. Volunteers completed the exercise individually but could work together to answer questions. The program was overseen by a facilitator, who also chaired a feedback session after its completion.

Outcomes

Feedback from volunteers was that the Scorecard was pitched at too high a level, that some of the industry language was not relatable or well understood, and that it assumed the population was homogeneous. The volunteers also did not know where to find information on procedures that support community disaster planning, response and recovery (PPR), or where to find the required statistical data. Despite these challenges, the volunteers understood the value of the tool and felt it could be adapted for easier community use.

The community development team acknowledged that they spent little time preparing the volunteers to use the Scorecard. Individuals did not complete the exercise with the support of the group or facilitators, nor as part of a well-prepared workshop. The team was aware there would be some difficulties with this approach but wanted to trial the Scorecard first and share feedback.

The way forward identified by the leaders was to use their assessments to develop and implement action plans. They also produced a detailed document with advice, comments and recommendations for improving the Scorecard.

Discussion

The case studies demonstrate that implementing the Scorecard is a valuable exercise for both community engagement and resilience building. Although each of the three councils adopted a unique approach to implementing the Scorecard, some key insights about the process are transferable:

- The working group is a powerful conduit for community engagement, community insight (for council) and multi-directional communication.

- Many working group members emerge as willing participants in ongoing community resilience initiatives, but require further direction and mandates from council.

- Formation of the working group can be difficult, particularly when dealing with sections of the community that do not usually engage with councils (e.g. new residents or day commuters).

- The working group chair has an important role in managing the process. It is the chair’s responsibility to ensure that all members have equal opportunity to participate in answering and scoring the questions, and that experts do not dominate the discussion.

- The Scorecard assists councils to better understand community members’ perceptions of risk, as well as the role and responsibilities of different agencies during disruptive events. It also allows non-council working group members to learn more about the role of council.

- The process of implementing the Scorecard can deliver practical secondary outputs in the short term. The case studies led to improved information dissemination to community members and revision of disaster management plans.

Overall, it was observed that successful implementation of the Scorecard occurred where there was alignment of senior management support with initiative at the operational level. The three case studies are examples of where this alignment occurred. Local, state and national contexts are critical factors that influence interest in, and uptake of, the Scorecard. An existing resilience agenda, strong EM focus and vulnerability to disaster were key contextual factors that sparked interest in the Scorecard, while the availability of resources, funding and structural support served as an impetus for action.

The case studies demonstrate that the Scorecard can be used successfully in different ways, in different contexts and for various purposes. It is important that a community assumes ownership of the Scorecard exercise by pre-identifying desired outcomes and undertaking the process in a way that is considerate of the unique concerns and needs of its members. It is also important that the Scorecard working group is representative of the whole community as far as possible. Having diverse perspectives expressed in the process was found to strengthen outcomes.

Conclusion

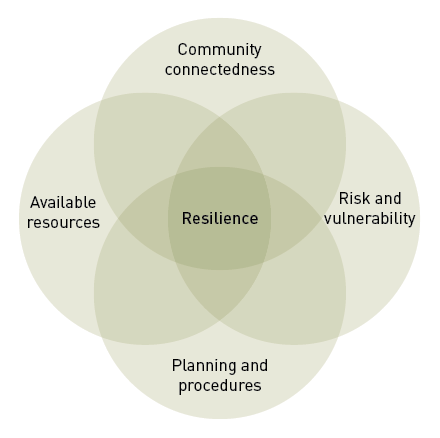

The Scorecard addresses key components of resilience based on elements of physical, organisational and social capital, which all communities possess to varying degrees. The Scorecard exercise can identify strengths and weaknesses and provide a point-in-time snapshot of a community’s resilience. The case studies highlight the community development potential of the Scorecard process, which provides a useful framework for community cohesion.

The Scorecard is an avenue for the EM sector, local councils and community-based groups to connect and address gaps in resilience. The case studies provide insight into aspects of the Scorecard process that facilitate resilience-building, and demonstrate that outcomes and experiences will vary across communities. Further testing of the Scorecard will consolidate recommendations and investigate whether they apply to other state and national contexts.

The project findings suggest that effective implementation of the Community Disaster Resilience Scorecard can support the development of programs and the allocation of funds, which in turn can help to build community resilience and reduce the socio-economic impact of future disruptive events, emergencies and disasters.

Acknowledgements

The authors acknowledge the assistance of volunteers who provided input to this project. Thanks are extended to the members of the Project Reference Group for their support and guidance. Funding for this project was gratefully received from the Australian Government National Emergency Management Program.

References

Australian Government 2011, National Strategy for Disaster Resilience, Attorney-General’s Department, Barton ACT, Australia.

Arbon P 2014, Developing a model and tool to measure community disaster resilience, Australian Journal of Emergency Management, vol. 29, no. 4, pp. 12–16.

Arbon P, Gebbie K, Cusack L, Perera S & Verdonk S 2012, Developing a model and tool to measure community disaster resilience, report prepared by the Torrens Resilience Institute, Flinders University, Adelaide.

Arbon P, Steenkamp M, Thompson A, Ramsey I, Gebbie K, Cusack L & Anikeeva O 2015, Implementation and evaluation of the Community Disaster Resilience Toolkit and Scorecard, report prepared by the Torrens Resilience Institute, Flinders University, Adelaide. At: www.flinders.edu.au/tri/toolkits.

Building Resilient Regions 2011, Resilience capacity index, University of California Berkeley. At: http://brr.berkeley.edu/rci/.

Cutter S, Barnes L, Berry M, Burton C, Evans E, Tate E & Webb J 2008a, A place-based model for understanding community resilience to natural disasters, Global Environmental Change, vol. 18, no. 4, pp. 598–606.

Cutter S, Barnes L, Berry M, Burton C, Evans E, Tate E & Webb J 2008b, Community and Regional Resilience: Perspectives from Hazards, Disasters, and Emergency Management, Hazards and Vulnerability Research Institute, University of South Carolina, CARRI Research Report. At: www.resilientus.org/wp-content/uploads/2013/03/FINAL_CUTTER_9-25-08_1223482309.pdf.

Emergency Volunteering 2011, Disaster Readiness Index, Volunteering Queensland & Emergency Management Queensland. At: www.emergencyvolunteering.com.au/qld/disasterready/dri.

Longstaff PH, Armstrong NJ, Perrin K, Parker WM & Hidek MA 2010, Building resilient communities: a preliminary framework for assessment, Homeland Security Affairs, vol. 6, no. 3, pp. 1–23.

Renschler C, Frazier A, Arendt L, Cimellaro G, Reinhorn A & Bruneau M 2010, Framework for defining and measuring resilience at the community scale: The People’s Resilience Framework, Technical Report MCEER-10-0006. At: www.mceer.buffalo.edu/pdf/report/10-0006.pdf.

Torrens Resilience Institute 2012, The Community Disaster Resilience Toolkit and Scorecard. At: www.flinders.edu.au/tri.

UNDP Drylands Development Centre 2013, Community based resilience analysis (CoBRA): Conceptual framework and methodology. At: www.seachangecop.org/node/1788.

Winderl T 2014, Disaster resilience measurements: Stocktaking of ongoing efforts in developing systems for measuring resilience, United Nations Development Programme. At: www.preventionweb.net/files/37916_disasterresiliencemeasurementsundpt.pdf.

About the authors

Imogen Ramsey is a Research Assistant at the Torrens Resilience Institute, Flinders University. She holds an Honours degree in psychology and is proficient in data analysis and interpretation. She worked closely with Dr Steenkamp on the evaluation of the Scorecard and liaised with community representatives throughout the process.

Dr Malinda Steenkamp is a Postdoctoral Research Fellow at the Torrens Resilience Institute with more than 20 years of research experience in epidemiology and population health. She has extensive knowledge of research project management and community resilience, and took the lead on the evaluation of the Scorecard.

Andrea Thompson is a Project Manager at the Torrens Resilience Institute. She is completing a Master’s degree in social work focused on community development and has postgraduate qualifications in urban and regional planning. Andrea has worked in state and local government for many years in the area of development strategy and planning policy.

Dr Olga Anikeeva is a Postdoctoral Research Fellow at the Torrens Resilience Institute with a research background in epidemiology and public health. In her role she is actively involved in study design, grant writing, data analysis and publication and dissemination of findings.

Professor Paul Arbon is the Director of the Torrens Resilience Institute and Dean of the School of Nursing and Midwifery at Flinders University. Pre-hospital care, mass-gathering medicine and disaster health have been consistent research focus areas throughout Prof Arbon’s career. He led the development of the Scorecard in 2012 and its evaluation in 2014.

Professor Kristine Gebbie is an Associate Director at the Torrens Resilience Institute and was involved in the development and evaluation of the Scorecard in 2012. For the past 15 years she has conducted research and taught in the areas of complex emergency and disaster preparedness, response and recovery.

TRI Community Resilience Scorecard items

1. How connected are the members of your community?

1.1 What proportion of your population is engaged with organisations (e.g., clubs, service groups, sports teams, churches and libraries)?

1.2 Do members of the community have access to a range of communication methods to gather and share information during times of emergency?

1.3 What is the level of communication between the local governing body and the population?

1.4 What is the general relationship of your community with the larger region or rest of the Shire?

1.5 What is the degree of connectedness across community groups (e.g., ethnicities/sub-cultures/age groups/new residents not in your community when the last disaster happened)?

2. What is the level of risk and vulnerability in your community?

2.1 What are the known risks of all identified hazards in your community?

2.2 What are the trends in relative size of the permanent resident population and the daily population?

2.3 What is the rate of the resident population change in the last 5 years?

2.4 What proportion of the population has the capacity to independently move to safety? (e.g., non-institutionalised, mobile with own vehicle, adult)

2.5 What proportion of the resident population prefers communication in a language other than English?

2.6 Has the transient population (e.g., tourists, transient workers) been included in planning for response and recovery?

2.7 What is the risk that your community could be isolated during an emergency event?

3. What procedures support community disaster planning, response and recovery?

3.1 To what extent and level are households within the community engaged in planning for disaster response and recovery?

3.2 Are there planned activities to reach the entire community about all-hazards resilience?

3.3 Does the community actually meet requirements for disaster readiness (informed public, communication plans, regular drills or exercises, etc.)?

3.4 Do post-disaster event assessments change expectations or plans?

4. What emergency planning, response and recovery resources are available in your community?

4.1 How comprehensive is the local infrastructure emergency protection plan? (e.g., water supply, sewerage, power system)

4.2 What proportion of the population with skills useful in emergency response/recovery (e.g., first aid, safe food handling) can be mobilised if needed?

4.3 To what extent are all educational institutions (public/private schools, all levels including early child care) engaged in emergency preparedness education?

4.4 How are available medical and public health services included in emergency planning?

4.5 Are readily accessible locations available as evacuation or recovery centres (e.g., school halls, community or shopping centres, post office) and included in resilience strategy?

4.6 What is the level of ready availability of food/water/fuel in the community?

Footnotes

1 Valuable insights were also gained from interested communities that did not implement the Scorecard. Detailed findings about all sixteen communities (including those who did not implement the Scorecard) are presented in the complete project evaluation report (Arbon et al. 2015).